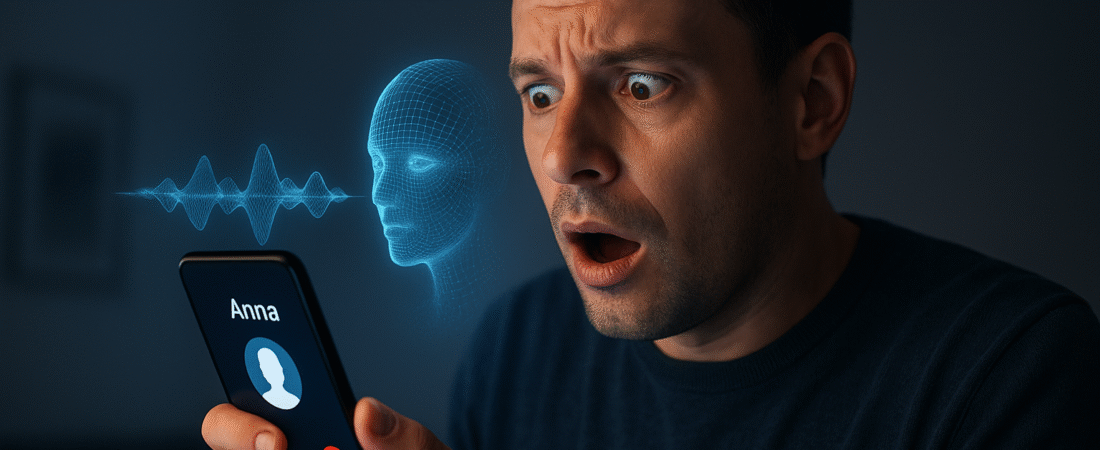

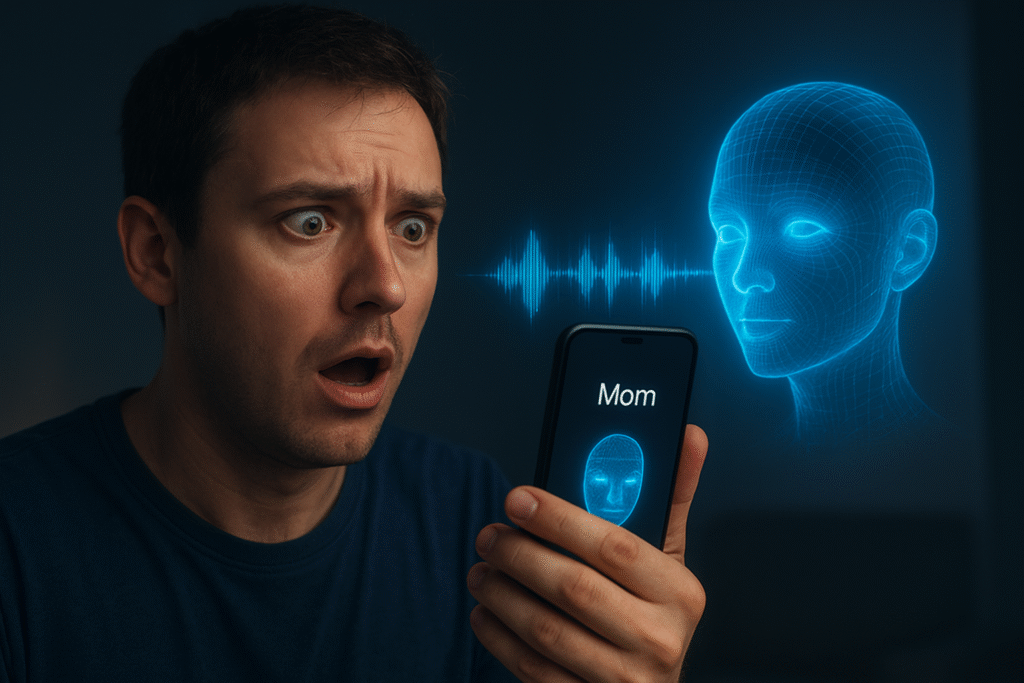

Imagine receiving a phone call from your daughter or brother in a complete panic, screaming that they have been in an accident, their phone battery is about to die, and they urgently need money. You can hear their voice, their mood, their desperation. But what if that voice is not real? Around the world, a wave of AI voice cloning scam calls is exploiting our trust in the voices of the people we love. Scammers do not need to hack your email or steal your login credentials. They only need a few seconds of a person's speech, which they can find on social media or YouTube, to create a convincing clone. Once they have cloned a voice, they can launch the scam immediately, often late at night when victims are most vulnerable.

Why It Is Important to Know About AI Voice Cloning Scams

The technology behind AI voice cloning scams is essentially the same as the technology used in virtual assistants, text-to-speech tools, and creative applications. Given just ten to fifteen seconds of someone's speech, voice synthesis algorithms can replicate that person's voice, accent, and even emotional tone. A 2024 Wired investigation reported that AI voice technology developed for entertainment and accessibility has been repurposed by criminals for fraud. The BBC and Forbes have reported several cases in which families wired large sums of money believing they were helping their loved ones.

In one U.S. case, a father sent $15,000 after hearing a deepfake of his son's voice asking for bail money. Police have publicly urged people to watch out for deepfake call fraud. Such frauds are only a small part of a larger wave of AI impersonation scams, in which scammers use fabricated voices, faces, or even videos to trick people into handing over money or to manipulate them psychologically.

Impact of AI Voice Cloning on People and Their Trust in Technology

This kind of deception takes both a financial and a psychological toll. Victims lose money and often suffer serious emotional trauma, made worse by the knowledge that something as familiar and intimate as a loved one's voice was turned against them. In a Forbes interview, most victims of family emergency scams said they felt guilty or ashamed once they realized they had been tricked, even though the fraud was almost impossible to detect. Elderly people are considered to be at the highest risk because they are often less familiar with the technology.

Grandparents have long been favorite targets of scammers, and fraudsters now use videos of grandchildren posted on social media to make their cloned-voice calls more convincing.

When panic takes over, reason goes out the window, and impostors exploit that moment to the fullest. At the societal level, these scams are slowly but surely eroding trust, one of the foundations of human relationships.

If anyone's voice can be imitated, doubt creeps in easily, and genuine calls for help may be met with suspicion. Experts believe this loss of authenticity could change how we verify identity, respond to emergencies, and uphold ethical standards in digital communication.

How to Detect an AI Voice Cloning Scam Call

The method behind an AI voice cloning scam is terrifyingly simple yet highly effective. Scammers start by downloading short voice samples from social media, YouTube, or anywhere else people speak publicly. With just a few seconds of clear audio, they use an AI tool to copy the person's voice pattern, tone, and even emotional inflection. The cloned voice can then be made to say anything the scammer wants, and it sounds as if the real person said it. Armed with the fake voice, fraudsters call from a phony number that looks very similar to a family member's.

The cloned voice follows a carefully staged script: a car crash, an arrest, or a kidnapping, always a moment of terror that ends with a demand for money. Scammers pressure the victim to act quickly and not to contact anyone else. Fear overrides reason, and some victims send money by wire transfer, cryptocurrency, or prepaid gift cards without stopping to think. Experts consider the emergency money call scam the most dangerous variant because of its emotional urgency. Because the voice is familiar, the victim's disbelief is minimal. Victims typically report that the call felt terrifyingly real, only to discover minutes later that their loved one had been safe all along. By then, the scammers have vanished without a trace.

Public and Media Reaction

AI voice cloning scams have become so widespread that they are now a major topic in the global media. Commentators liken them to the early days of phishing, except that this time the fraud arrives by ear, in the voice of someone close to you. In one experiment, a BBC investigative team showed how quickly journalists' voices could be cloned, producing recordings that were nearly indistinguishable from the originals. In a separate article, Wired cloned a reporter's voice and used it in a simulated call that fooled even close colleagues. Government authorities have recently begun paying attention to the problem.

The U.S. Federal Trade Commission (FTC) and India's Indian Cyber Crime Coordination Centre (I4C) have voiced concern about deepfake call fraud and AI impersonation scams, respectively.

Their warnings urge people to watch for emotional manipulation and requests for immediate money transfers. So far, however, there is no regulation that addresses the problem.

AI is advancing much faster than the laws meant to govern it, leaving citizens as the first line of defense, without legal protection.

Technology experts and ethicists are urging AI developers to build in watermarking, an embedded signal that can help identify whether a piece of audio was artificially generated.

Such safeguards are still at a very early stage and are not yet widely available to the public.

For now, human vigilance and verification remain the most reliable defenses.

Steps to Take if Scammed by AI Voice Fraud

Although AI voice cloning scams are sophisticated, they can still be stopped through vigilance, healthy skepticism, and planning ahead as a family. Families should agree on verification methods and have a plan ready in case they receive a crisis call. One smart approach is to create a family code word known only to a few trusted members. If a caller who claims to be in trouble cannot give that word when asked, that is a strong sign you are dealing with a fraudster. The simplest step is to hang up and call the person back on the number saved in your contacts, not the number that just called you.

It also helps to call another family member or friend to confirm the person's safety before sending any money. Raising awareness matters too: let elderly relatives and others who are less familiar with technology know that they may receive fake family emergency calls designed to frighten or trick them. This alone can greatly limit the harm. Talk about AI-enabled deception as openly as you would about phishing or password security. The goal is not to scare people but to prepare them.

Fake callers rely on fear. Stay calm, and remember that even in an emergency you are entitled to verify what you are told. Limiting how much personal audio you share online, especially videos of children or private conversations, makes it harder for criminals to obtain voice samples. Caller ID, spam filters, and security software that flag unfamiliar numbers or suspicious calls can also offer a layer of protection. If you receive a suspected AI voice cloning scam call, report it immediately to your local cybercrime unit or police. The sooner victims report, the sooner police can identify patterns and warn others.

If you have already lost money, contact your bank right away, file a police report, and get in touch with your national fraud hotline.

AI voice cloning is a new generation of cybercrime, uniquely deceptive because it uses sound to manipulate victims on a deeply human, emotional level. The voice of a family member, once a source of comfort and trust, can now be fabricated and weaponized within minutes. The emotional manipulation involved shows that the greatest threat here is psychological rather than technical. Artificial intelligence has blurred the line between reality and simulation, but human judgment can still draw it.

When faced with an emotional or desperate request for money, don't rush: pause, verify, and double-check. The voice may sound completely real, but that does not mean the call is genuine. The lesson is simple but essential: always confirm that the need is real before sending money, even when the voice sounds familiar. AI can imitate a person's tone, emotion, and identity, but it cannot replicate genuine empathy. Staying informed, alert, and in open communication protects not only your money but also the trust that holds families together in an age of digital dependency.